Context

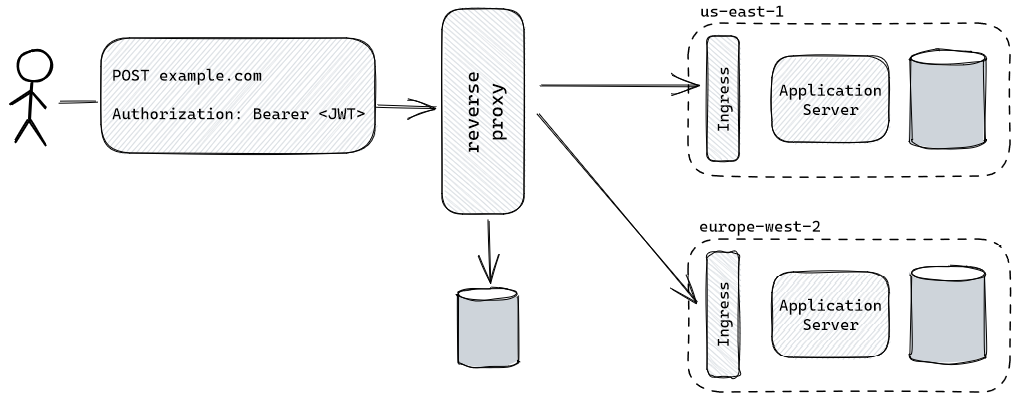

Traditionally, reverse proxies are configured with a static set of rules which determines the correct upstream/backend. When put in front of a sharded architecture, they might route traffic to the appropriate backend based on a subdomain (e.g., us-east-1.example.com) or a path (e.g., example.com/europe-west-2).

This is particularly common if you have the same application deployed in two different jurisdictions (data and control plane). Most of the time it is enough to have customers use the unambiguous URL when interacting with the application - in those cases a global reverse proxy (or API Gateway) might not even exist.

However, sometimes it might be desirable (or necessary) to have a single hostname that serves all customers. For example, you might want POST requests to be sent to a short URL, using JSON Web Tokens for authorization. Or you might be creating a GitHub App, which can only configure a single webhook URL to receive events.

In such situations, for every request, we need to look up the correct backend for that request based on its contents (headers, body, query parameters) before dispatching it. The static rules from traditional reverse proxies aren’t enough in this case.

Proposed Solution

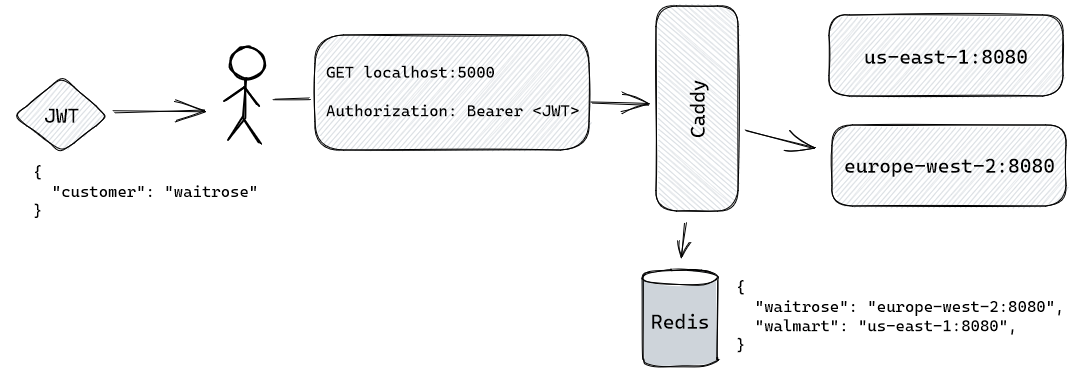

This can be solved quite easily with Caddy. Here are the components in our proof of concept:

- Two customers

  - waitrose, served by the European backend

  - walmart, served by the American backend

- Redis for storing the mapping between customers and backends

- Ruby and OpenSSL for generating a JWT

- Caddy as a reverse proxy layer

- Backend servers are simple Gin applications

First, we will populate our shard look-up table in Redis:

> SET walmart 'us-east-1:8080'

> SET waitrose 'europe-west-2:8080'

In this example, a request will be sent on behalf of customer waitrose. Since the customer information will be embedded in the JWT, we need a way to generate a token. First, we will generate asymmetric keys (symmetric would also have worked):

$ openssl genrsa -out cert/id_rsa 2048

$ openssl rsa -in cert/id_rsa -pubout > cert/id_rsa.pub

Next, we leverage Ruby’s conciseness to generate the JWT:

require 'openssl'

require 'jwt'

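# Load the private key generated above

priv = OpenSSL::PKey::RSA.new(File.read('cert/id_rsa'))

# Sign the claims with RS256 and print the resulting token

puts JWT.encode({customer: 'waitrose'}, priv, 'RS256')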

Brilliant, we have everything we need to send a request. Next, we implement our own Caddy module that allows for the dynamic selection of a backend. Here’s a brief description of its behaviour:

- Intercept the request

- Decode the token under the Authorization header using the Bearer scheme

- Look up the correct shard from Redis

- Save the shard information in a variable called shard.upstream - this variable will be exposed in the Caddyfile

- Enrich the request with an extra header X-Customer (more on it later)

And the code:

func (m JWTShardRouter) ServeHTTP(w http.ResponseWriter, r *http.Request, next caddyhttp.Handler) error {

authHeader := r.Header.Get("Authorization")

tokenStr := strings.TrimPrefix(authHeader, "Bearer ")

claims := ParseJWT(tokenStr)

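customer, ok := claims["customer"].(string)

if !ok || customer == "" {

// Reject tokens that do not carry a customer claim

return caddyhttp.Error(http.StatusUnauthorized, errors.New("token has no customer claim"))

}

r.Header.Set("X-Customer", customer)

// Use the request's own context so the lookup is cancelled with the request

shard, err := rdb.Get(r.Context(), customer).Result()

if err != nil {

return caddyhttp.Error(http.StatusBadGateway, err)

}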

caddyhttp.SetVar(r.Context(), "shard.upstream", shard)

return next.ServeHTTP(w, r)

}
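One detail worth noting in the handler above: `strings.TrimPrefix` returns the header unchanged when the `Bearer ` prefix is absent, so a `Basic` credential or an empty header would be handed to the JWT parser as-is. A stricter extraction could look like the sketch below. The `bearerToken` helper is hypothetical and is not part of the original module.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// bearerToken (hypothetical helper) extracts the token from an Authorization
// header value, rejecting any scheme other than Bearer.
func bearerToken(header string) (string, error) {
	scheme, token, found := strings.Cut(header, " ")
	// HTTP authentication scheme names are case-insensitive.
	if !found || !strings.EqualFold(scheme, "Bearer") {
		return "", errors.New("authorization header is not a bearer credential")
	}
	token = strings.TrimSpace(token)
	if token == "" {
		return "", errors.New("bearer token is empty")
	}
	return token, nil
}

func main() {
	headers := []string{
		"Bearer abc.def.ghi",
		"bearer abc.def.ghi",
		"Basic dXNlcjpwYXNz",
		"",
	}
	for _, h := range headers {
		token, err := bearerToken(h)
		fmt.Printf("%q -> token=%q err=%v\n", h, token, err)
	}
}
```

Failing early here keeps malformed credentials away from both the JWT parser and the Redis lookup.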

Finally, we use the registered shard.upstream variable in our Caddyfile:

{

order jwt_shard_router before method

}

http://localhost:5000 {

jwt_shard_router

reverse_proxy {

to {http.vars.shard.upstream}

}

}

Only the backend server is left now. Since this is just a proof of concept, it doesn’t do much. It replies to requests coming to / and leverages the fact that Caddy has already decoded the customer from the JWT and put that information in the X-Customer header. Knowing the customer, it greets them in the response while including the shard name (provided through an environment variable) in the X-Shard header. This response from the backend server demonstrates that the process works end-to-end.

func main() {

r := gin.Default()

r.GET("/", func(c *gin.Context) {

customer := c.Request.Header.Get("X-Customer")

c.Header("X-Shard", os.Getenv("SHARD"))

c.JSON(http.StatusOK, gin.H{

"message": fmt.Sprintf("Hello %s!", customer),

})

})

r.Run()

}

Time to test our POC. We spin up our patched Caddy server, Redis and the two backend servers:

$ docker-compose up

...

$ docker-compose ps

SERVICE COMMAND PORTS

caddy "/caddy run" 0.0.0.0:5000->5000/tcp

europe-west-2 "/upstream"

redis "docker-entrypoint.s…" 6379/tcp

us-east-1 "/upstream"

And issue the request:

$ http localhost:5000 -A bearer -a $WAITROSE_TOKEN

HTTP/1.1 200 OK

Content-Length: 29

Content-Type: application/json; charset=utf-8

Date: Sun, 12 Mar 2023 12:00:00 GMT

Server: Caddy

X-Shard: europe-west-2

{

"message": "Hello waitrose!"

}

Success! A full example is available on github.com/arturhoo/caddyshardrouter.

Why Caddy and Alternatives

I’ve chosen Caddy as it has been on my radar for a while for its focus on developer experience - as seen above, the dynamic selection of upstream servers was made possible in less than 80 lines of code. It has also had the opportunity to mature with the v2 rewrite.

Being written in Go allows us to generate a self-contained binary that can easily be placed in a distroless image. To further exemplify Caddy’s focus on devx, the xcaddy utility allows us to build a patched Caddy server with our module through a single command.

Here are some potential alternatives:

- OpenResty: powered by Nginx, allows custom Lua modules to be written.

- HAProxy: offers HAProxy Maps, which, coupled with the possibility of extending it with Lua, might offer a compelling alternative.

- Kong: takes OpenResty one step further by facilitating the development of new Lua plugins. Is considered an API Gateway.

- Apache APISIX: also an API Gateway written in Lua. However, plugins can be written in Go and Python.

- Envoy Proxy: proxy powering Istio. Allows for dynamic configuration with custom control planes.